Just trying to find out: if I have a Docker volume, is there a way to manage the files within it without using the CLI, with a file manager similar to cPanel's File Manager?

Thanks to @sid, who showed me a way to log into my Docker machine via SFTP.

On a mac, download the free Cyberduck from https://cyberduck.io/

Create a new bookmark

Server: 111.111.222.100 (Change to the IP Address of the Docker machine)

Username: root

Password: leave blank, we will use an SSH key file

SSH Private Key: ~/.docker/machine/machines/Target Name from Wappler/id_rsa

Click Connect. It should come up with a security alert about an unknown fingerprint, which you accept, and then it connects to the /root folder.

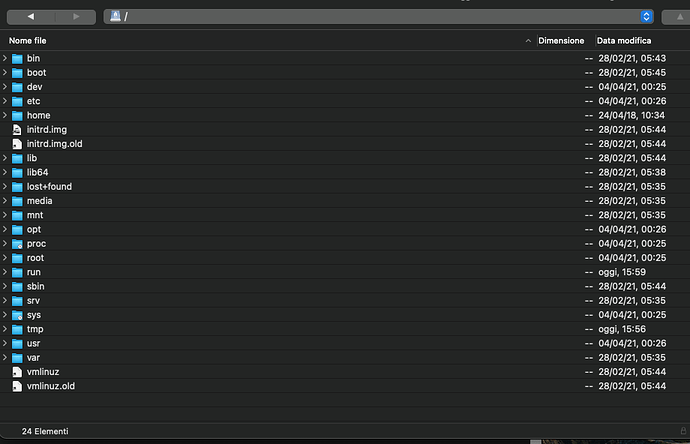

Go up a directory to /

Then navigate to /var/lib/docker/volumes/ProjectNameFromWappler__TargetNameFromWappler_user_uploads/_data/

And now you will be able to manipulate your user uploaded files directly through SFTP.

Thank you @psweb for sharing this … I was looking for it too…

By the way, Cyberduck also supports S3!

For Windows, one can use https://winscp.net/.

In that case you might have to convert the RSA key to PPK format with PuTTYgen. Not sure about it though.

In my case, WinSCP imported the connection details from PuTTY, so it's using PPK.

On one of my servers I can't find the folder ProjectNameFromWappler__TargetNameFromWappler_user_uploads/_data/ inside the docker folder.

On another I find the folder, but not the website files.

That sounds a little odd.

At least with Digital Ocean, the path starts like this:

/var/lib/docker/volumes/

The next part varies depending on your Project Name in Wappler. My Project Name is MyTestProject, which it changes to all lowercase: mytestproject.

Then it adds 2 underscore characters __ and joins on the name of the Target as set in Wappler. Mine is MyTestServer, which again it changes to all lowercase: mytestserver. Lastly it adds _user_uploads to the end of the name.

The final result of that one name is mytestproject__mytestserver_user_uploads

Inside that directory is a folder called _data, making the final path to your user uploaded files

/var/lib/docker/volumes/mytestproject__mytestserver_user_uploads/_data/
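The naming rule above can be sketched as a small shell function. This is only an illustration of the pattern described in this post (lowercase project name, two underscores, lowercase target name, then _user_uploads); volume_path is a made-up helper name, not something Wappler or Docker provides, and other setups may name their volumes differently.

```shell
# volume_path is a hypothetical helper, not part of Wappler or Docker.
# Assumption: the volume is named <project>__<target>_user_uploads, all lowercase,
# as described in the post above.
volume_path() {
  project=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  target=$(printf '%s' "$2" | tr '[:upper:]' '[:lower:]')
  printf '/var/lib/docker/volumes/%s__%s_user_uploads/_data/\n' "$project" "$target"
}

# Prints /var/lib/docker/volumes/mytestproject__mytestserver_user_uploads/_data/
volume_path MyTestProject MyTestServer
```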

A few things you should check: in Wappler Project Settings, in the first tab, General, have you set a User Uploads Folder? Mine is set to /public/travel-images

If that is set then, still in Wappler, go to >_Host Server SSH at the bottom right. Wappler should log in to your hosting account's server in an SSH session.

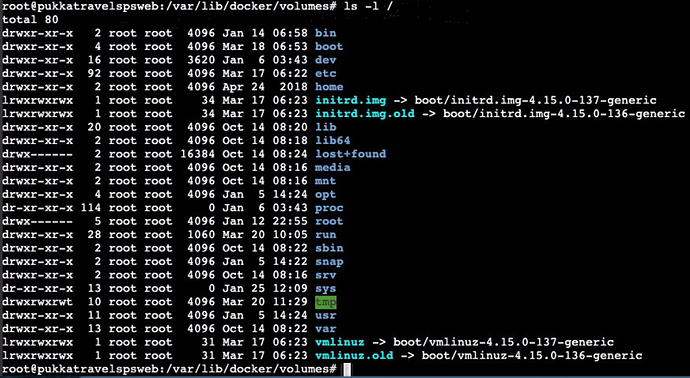

Once connected try the following

ls -l /

You can see my var folder there, so now carry on working through the path one directory at a time to see where things are missing. The next command should get a directory listing for the folder /var/

You should find your path somewhere, good luck
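If stepping through the path by hand gets tedious, something like the following could do the same check in one go inside the SSH session. It is a rough sketch only: first_missing is a made-up helper name, it expects an absolute path, and the example path is the one from my own project, so substitute your own names.

```shell
# first_missing is a hypothetical helper: it walks an absolute path one
# directory at a time and reports the first part that does not exist.
first_missing() {
  current=""
  # Split the path on "/" into positional parameters.
  old_ifs=$IFS
  IFS=/
  set -- $1
  IFS=$old_ifs
  for part in "$@"; do
    # Skip the empty field produced by the leading "/".
    [ -z "$part" ] && continue
    if [ -d "$current/$part" ]; then
      current="$current/$part"
    else
      echo "missing: $current/$part"
      return 1
    fi
  done
  echo "all present: $current"
}

# Example path from this thread; change it to match your project and target.
first_missing /var/lib/docker/volumes/mytestproject__mytestserver_user_uploads/_data || true
```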

That is why I advised Brian to go for S3 when you have user assets and Docker. You really don't want to have those in the Docker images, as those are also rebuilt frequently.

Hello Paul

I am trying to connect to the server to manage files with a file manager.

I have a nodejs site on Docker / Hetzner, I downloaded CyberDuck Mac Version, but I can’t figure out exactly how to set it up.

- SFTP (SSH File Transfer Protocol)?

- Server = IP and port of the Hetzner server?

- User = root

- Password = blank

- SSH Key = with dropdown menu where do I find it?

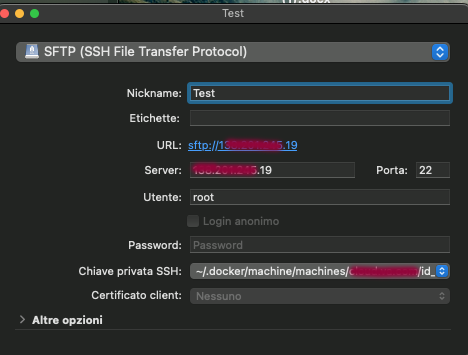

Hi Marzio, here are my settings as I use it.

Connection Type: SFTP (SSH File Transfer Protocol)

Nickname: Whatever You Want

Server: IP of your Hetzner Docker Machine - Port: 22

Username: root

Password: Blank

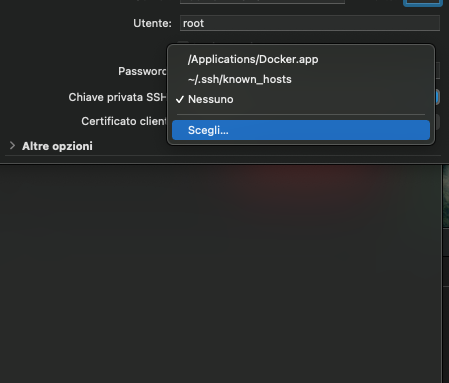

SSH Private Key: Choose... Then see below.

For the SSH Private Key, you need to direct Cyberduck to the file named id_rsa, which is automatically created when Wappler makes the remote connection to your Docker Machine. The file is stored inside a hidden folder on your machine, so you need to toggle on Show Hidden Files to see it.

To toggle hidden files on or off on a Mac, use CMD+SHIFT+. and to turn them back off just do the same again. The last character is a dot/period/full stop, or whatever you call that locally.

Once you can see hidden files navigate to your Home directory ~/

In there you should see a folder structure like .docker/machine/machines/your_remote_target_name/id_rsa

So the full path should be ~/.docker/machine/machines/your_remote_target_name/id_rsa

Your Home directory, as I refer to it, is your Mac user account folder, so yours is probably marzio I assume. You can find it by opening your main hard drive's root directory and going to Users/marzio/
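If you would rather check from Terminal than hunt through hidden folders in Finder, a sketch like this could confirm the key is where Cyberduck needs it. It assumes the ~/.docker/machine/machines/ layout given in the first post; machine_key is a made-up helper name, and your_remote_target_name is a placeholder for the target name you set in Wappler.

```shell
# machine_key is a hypothetical helper. The directory layout is an assumption
# taken from the key path given in the first post of this thread.
machine_key() {
  key="$HOME/.docker/machine/machines/$1/id_rsa"
  if [ -f "$key" ]; then
    # Print the full path so it can be pasted into Cyberduck's file chooser.
    echo "$key"
  else
    echo "no key at $key" >&2
    return 1
  fi
}

# Replace your_remote_target_name with your own target name.
# If the key is not found, list the machine folders that do exist.
machine_key your_remote_target_name || ls -a "$HOME/.docker/machine/machines" 2>/dev/null || true
```

In the macOS file chooser you can usually press CMD+SHIFT+G and paste the printed path instead of showing hidden files.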

I followed your instructions and did get a list of files, but I still don't understand how it works, or especially how it is useful.

Tomorrow I will study it calmly.

Of course, I really miss a real remote file manager.

Hello Paul

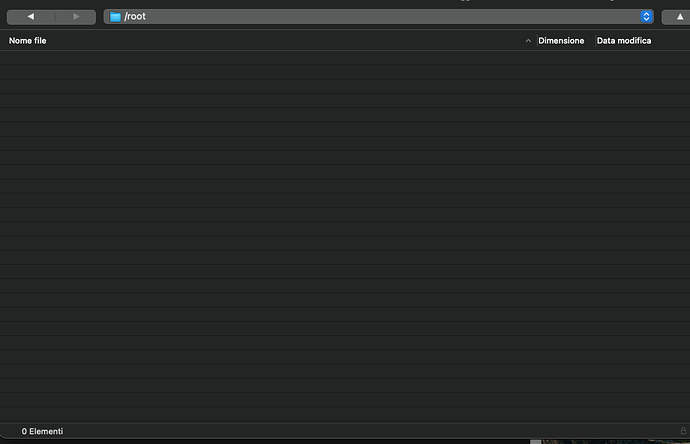

I tried the settings again and it connects, but the / root directory is empty

![Schermata 2021-04-08 alle 16.02.20|690x444]


You should be able to see the docker stuff at this path.

The question is: is it good practice to save images that need to be persistent inside the Docker image? Or would it be better to use S3 or block storage?

I don't know S3, but as far as I understand, it is not possible with a Hetzner server.

My requirement is:

1- First of all being able to upload folders with product images (related to existing records in the database) to the server

2- Being able to manage the images (present only remotely) with back-end (insert, update and delete)

3- Knowing whether a remote deployment deletes the images that are not present locally

It is considered a good practice to keep user uploaded/generated files on a separate server - own server or S3 kind of service.

This helps with security and portability, from what I understand.

I am not very good with Docker. Have just worked on 1 project.

@Hyperbytes might have answers for you here.

The situation with Docker is that when you deploy your site to a droplet, the entire site is overwritten. If you have files/images uploaded, then they will also be overwritten/deleted.

The ability to set an uploads folder in the target does provide some flexibility, but I found it became problematic.

The solution is to use S3 storage such as DO Spaces, which provides a permanent store for them, although I guess you could use any server as long as you can upload to it and retrieve from it. D.O. Spaces also provides the ability to set up your own CDN and deliver fast from an edge cache. One D.O. S3 space can be shared between multiple sites, so it is quite cost effective.

I don’t understand anything anymore

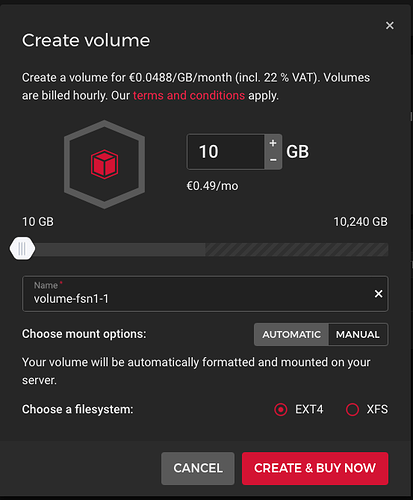

With Hetzner I have to buy 20 GB of volume

Then S3 or Cyberduck?

Is this what it takes to manage uploads (pdf and images)?

But if every deployment deletes all the images that I do not have locally, then it is a disaster

I’m very confused

Sorry, I have never used Hetzner so I can't comment on costs etc., but basically you should use an S3 option regardless.

You can use any S3 provider with Hetzner. I use https://cloud.ionos.com/storage/object-storage for example. But you can also use the built in S3 providers in Wappler.

You don't have to use the storage options from your Docker provider.